We are heading into GTC 2026, one of the most important events in the AI world, and this year we expect a massive shift in how computing is perceived.

The race for AI infrastructure has changed significantly over the past few years, as shifting compute requirements have forced companies like NVIDIA and AMD to keep innovating in what they offer. Since 2022, training workloads have surged in popularity, and Hopper and Blackwell capitalized on that. Moving into 2026, agentic workloads are the next focus area for compute providers, which is why NVIDIA's upcoming GTC announcements will center on them. You will hear 'agentic performance' discussed a lot, and Team Green has positioned itself strategically for this shift.

NVIDIA-Groq Deal Set to Materialize at GTC 2026, Marking the First Sign of a Shift Away from GPU-Only Compute

We have been ahead of the curve on NVIDIA's Groq acquisition, having been among the first to discuss how important the agreement is for the world of compute. Heading into GTC 2026, NVIDIA is expected to turn its collaboration with Groq into an actual product, and one of the prospects we are looking forward to is a blend of Groq's LPUs with NVIDIA's Vera Rubin systems. With Vera Rubin, Team Green is expected to offer a hybrid compute tray configuration featuring LPUs, allowing NVIDIA to capitalize on disaggregated inference, where the stages of an inference request run on hardware specialized for each stage.

There is plenty of speculation about how LPUs would be integrated into Rubin systems, but we have discussed the possibility of LPUs in 64-, 128-, and 256-unit configurations within an individual compute tray, linked to Rubin GPUs via NVLink Fusion. Jensen has already said the Groq agreement will play a role similar to Mellanox, indicating that LPUs will complement NVIDIA's GPUs in specific workload stages, such as decode. Rubin CPX already targets prefill, which means the company would cover both major stages of a typical inference request.
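
To make the prefill/decode split concrete, here is a minimal Python sketch of disaggregated inference, under simplified assumptions: a request's prompt is processed once in a prefill stage, output tokens are then generated one at a time in a decode stage, and each stage is mapped to its own hardware pool. The pool labels `rubin_cpx` and `lpu`, the `STAGE_TO_POOL` table, and the `plan` helper are hypothetical illustrations, not NVIDIA's or Groq's actual scheduler, and the KV-cache handoff between pools is not modeled.

```python
from dataclasses import dataclass


@dataclass
class Request:
    prompt_tokens: int  # processed together in a single prefill pass
    output_tokens: int  # generated one at a time during decode


# Hypothetical mapping of inference stages to hardware pools (an assumption, not an NVIDIA API).
STAGE_TO_POOL = {"prefill": "rubin_cpx", "decode": "lpu"}


def plan(req: Request) -> list[tuple[str, str, int]]:
    """Return (stage, pool, tokens_processed) steps for one request."""
    # Prefill: the whole prompt passes through the model at once (compute-heavy).
    steps = [("prefill", STAGE_TO_POOL["prefill"], req.prompt_tokens)]
    # Decode: one forward pass per generated token, reusing the KV cache built
    # during prefill (the cache transfer between pools is not modeled here).
    steps += [("decode", STAGE_TO_POOL["decode"], 1) for _ in range(req.output_tokens)]
    return steps


if __name__ == "__main__":
    for step in plan(Request(prompt_tokens=2048, output_tokens=3)):
        print(step)
```

The point of the split is that each pool can be sized and tuned for the one stage it serves, rather than asking a single chip type to handle both.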

There is a lot more to discuss about the role LPUs will play in Rubin's architecture, but essentially, the upcoming generation marks a 'platform' shift, with NVIDIA offering various configurations targeted at specific workloads. This is why we say the company's "GPU-only" approach has become somewhat outdated, especially given how AI workloads are evolving.

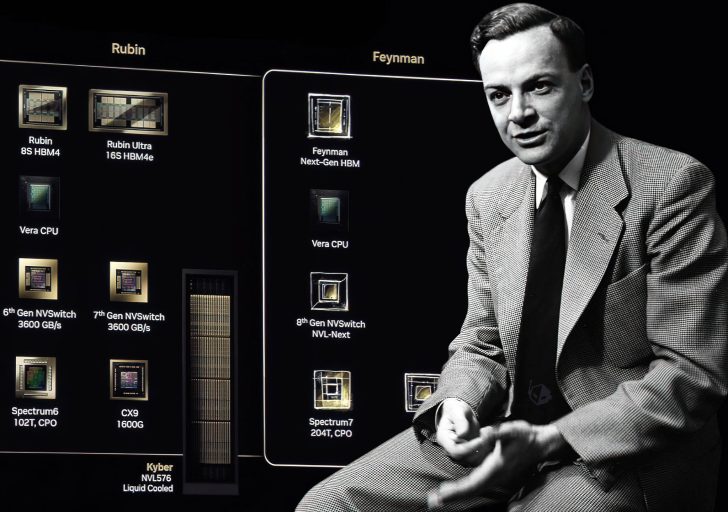

NVIDIA’s Next-Gen Feynman AI Chips, Diving Into 3D Stacking, 1.6nm Goodness & Immense Power

Since Vera Rubin is already in full production, NVIDIA is expected to give us a deep dive into Feynman, its next-generation AI architecture. Feynman was already touched on at GTC 2025, but based on what we know about the lineup, one of its more significant aspects is that NVIDIA will actually lean on process scaling (Moore's Law) this time to help it scale compute capabilities. Feynman is reportedly built on TSMC's A16 (1.6nm-class) process, and NVIDIA is expected to be the node's exclusive customer, given how limited its use case would be for other customer segments.

Jensen has already talked about showcasing chips at GTC 2026 that were "never seen before", and Feynman is expected to bring a major revamp in architecture design. The lineup is reported to use hybrid bonding, likely through TSMC's SoIC, with Intel's EMIB packaging also mentioned as a possibility, and one report suggested that Feynman would integrate Groq's LPUs directly. We could hear talk of LPUs being stacked onto the Feynman compute die, which makes sense given that A16's backside power delivery frees up front-side space for LPU connections.

There were also rumors that NVIDIA is considering Intel's 14A process for its Feynman chips, but this hasn't been confirmed. One thing is clear from the above: Feynman will mark a major shift in how NVIDIA approaches microarchitecture, and its rack-scale solutions will likely evolve at a similar pace across generations. This supports our view that GTC 2026 will mark a 'major' shift in how computing is done.

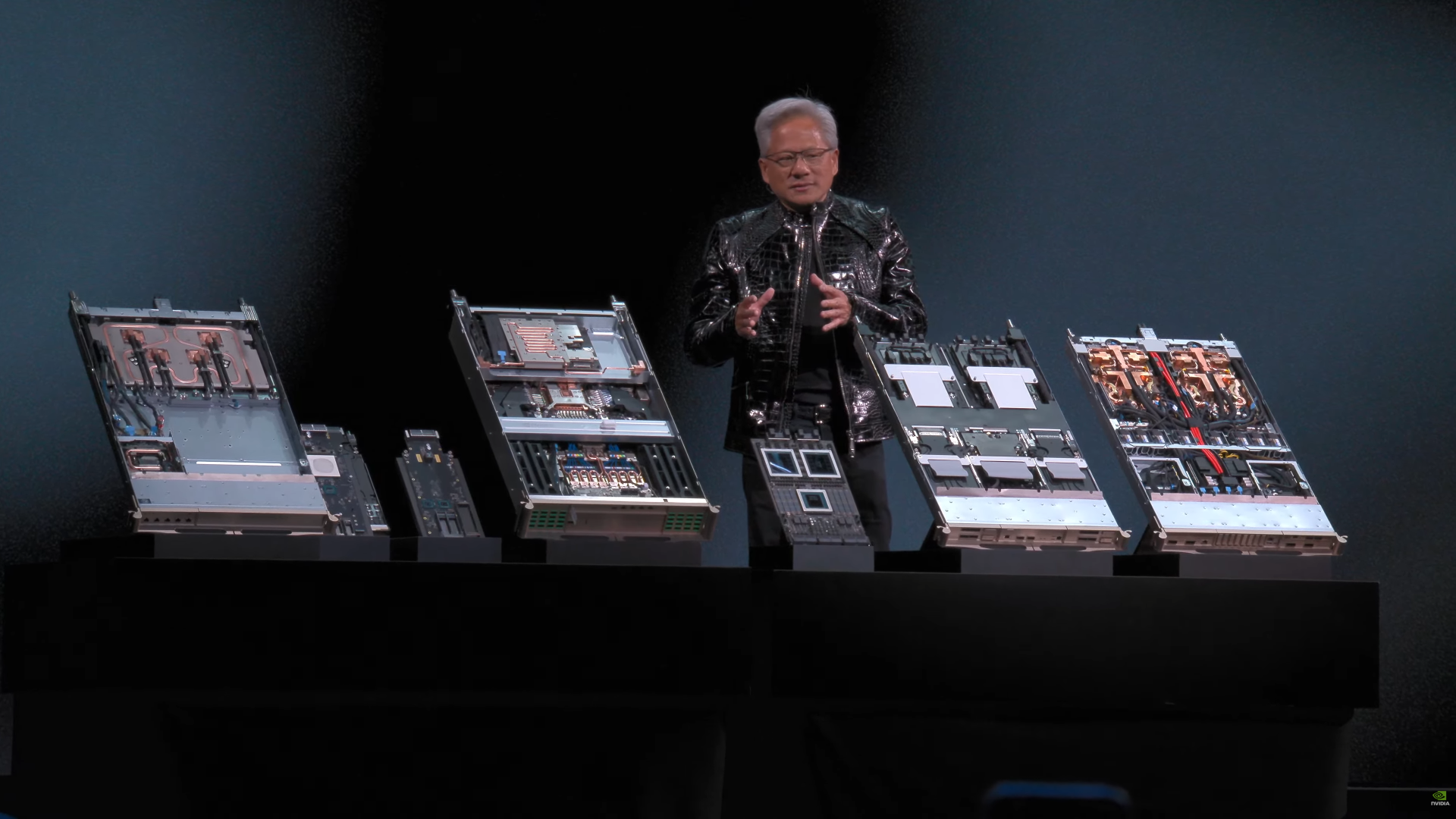

NVIDIA’s Vera Rubin AI Lineup: DGX NVL8, NVL72, Rubin CPX & the Mega NVL576 Rack-Scale Architectures

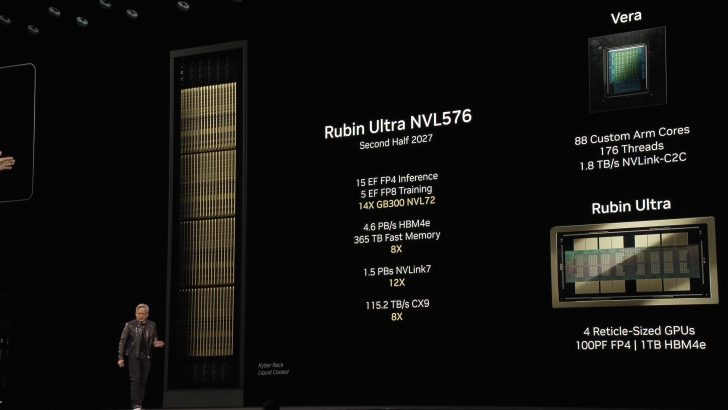

NVIDIA isn't done with Vera Rubin yet; there is still a lot to discuss. Back at CES 2026, Team Green showcased the NVL72 rack in its 72-chip configuration, but it's important to note that this is, for now, a baseline offering. NVIDIA is also looking to scale up to NVL144 and NVL576, but reports suggest we might not see the former, given the demand NVIDIA has seen from its customers. We also saw NVIDIA unveil Rubin CPX, a context-focused rack-scale option for prefill, but little has been said about customer deployments.

Of all of these, NVL576 will be one of the most interesting to watch, since it marks NVIDIA's shift to the new "Kyber" rack generation. With Kyber, NVIDIA will stack compute trays vertically, like books on a shelf, in what it calls vertical blades, and will move to an 800 VDC facility-to-rack power-delivery model. Based on what NVIDIA has told us in the past, NVL576 will be built around Rubin Ultra GPUs, which bring an overhaul of chiplet configurations, as detailed in our previous coverage.

NVL576 also changes how interconnects are approached, which is why we may see a shift away from copper as well. With NVIDIA's co-packaged optics (CPO) switches, the idea is to overcome the thermal constraints that copper would impose on a 576-GPU configuration. Moving to CPO is also expected to bring major gains in throughput, switching capacity, and latency. We'll discuss this approach in detail once NVIDIA shares more at GTC, but for now, expect the company to offer the world an optics-based mega-rack solution.

I wouldn't be surprised by an NVL1152 showcase at GTC 2026, but we'll have to wait and see how the racks evolve. Rubin and Rubin Ultra still have plenty of spotlight to claim before Feynman arrives, which is why Jensen will likely spend a lot of time on them. We also expect major CPU-focused announcements, including a collaboration with Intel.

NVIDIA’s GTC 2026 starts March 16, with Jensen’s keynote commencing at 11:00 AM PT. As always, we’ll be the first to talk about what’s being unveiled at the event, so make sure to keep a close eye on the website.

Follow Wccftech on Google to get more of our news coverage in your feeds.
