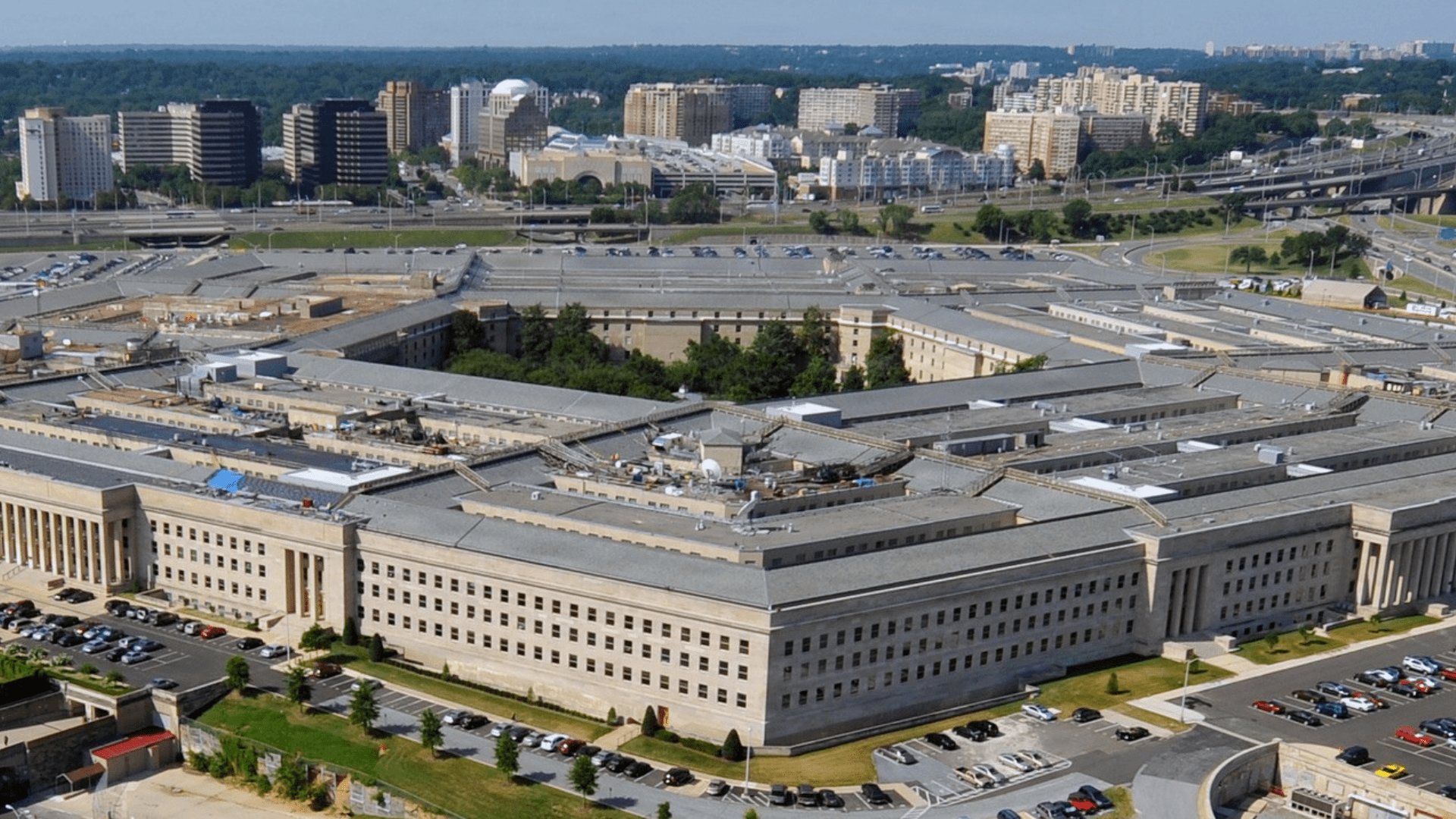

OpenAI’s hardware lead, Caitlin Kalinowski, said she has stepped down from the company after raising concerns about its recent agreement with the US Department of War. In a post shared on X, Kalinowski said the decision followed OpenAI’s move to deploy its AI models on the Pentagon’s classified cloud networks.

According to her, the company moved too quickly to finalize the arrangement without allowing sufficient time for broader internal and public discussion about the implications. Kalinowski noted that while AI can play an important role in national security, certain boundaries require far more scrutiny.

Surveillance of Americans without judicial oversight and the development of lethal autonomous systems without clear human authorization, she said, are issues that deserved more deliberation before the partnership moved forward.

OpenAI defends safeguards after Kalinowski’s resignation

The former hardware lead said she holds “deep respect” for OpenAI CEO Sam Altman and the broader team, but argued the Pentagon partnership was announced before clear safeguards had been defined. “It’s a governance concern first and foremost. These are too important for deals or announcements to be rushed,” Kalinowski wrote in a follow-up post.

OpenAI said the day after the agreement was announced that the partnership includes additional safeguards designed to limit how its technology can be used. The company reiterated on Saturday that its “red lines” prohibit applications such as domestic surveillance or the deployment of autonomous weapons.

In a statement to Reuters, the company said it understands that its work in this area can generate strong opinions and debate. It added that it plans to continue engaging with employees, government representatives, civil society groups, and communities worldwide as the conversation evolves.

Just over a week ago, OpenAI revealed its partnership with the Pentagon, following failed talks between the Department of War and Anthropic, which had sought safeguards to prevent its AI from being used for mass domestic surveillance or fully autonomous weapons.

Altman stresses human oversight and legal protections

After the company signed the agreement last week, Altman explained that the contract incorporates protections similar to those that were a point of contention in Anthropic’s negotiations.

The tech leader emphasized that two of OpenAI’s core safety principles – bans on domestic mass surveillance and ensuring human responsibility for the use of force, including in autonomous weapons systems – are reflected in the Pentagon agreement. He added that the Department of War agrees with these principles, has codified them in law and policy, and that the contract puts them into practice.

“We also will build technical safeguards to ensure our models behave as they should, which the DoW also wanted. We will deploy FDEs to help with our models and to ensure their safety, we will deploy on cloud networks only,” Altman wrote on X.

He added that the company is urging the Department of War to extend the same terms to all AI firms, arguing that these conditions should be acceptable across the industry, and that OpenAI prefers resolving tensions through practical agreements rather than legal or governmental action.

According to media reports, Altman also told employees during an all-hands meeting that the government will let OpenAI develop its own “safety stack” to prevent misuse. He emphasized that if the AI model declines a task, the government would not compel the company to override that refusal.
